We recently wrote an article, published in Industrial Photonics magazine, describing our novel navigation technology. The article is also available online; the full text follows.

Robots With Laser and Vision Systems Conquer New Industrial Terrain

SPENCER ALLEN, AETHON INC.

Navigation based on reflectors has limitations due to objects blocking their placement. Courtesy of Aethon.

Lidar is a distance measurement method that uses a pulsed laser and a highly sensitive detector to determine distance, using time-of-flight measurements or, for very short distances, phase-shift measurements. When combined with a mechanism that sweeps the laser in a plane or other pattern, it yields an array of distance readings at consecutive angles or directions. Converting this 2D or 3D range data from polar (or, in 3D, spherical) coordinates to Cartesian coordinates produces a 2D or 3D contour map of the scene.
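As a minimal sketch of the two calculations just described, the Python below converts a round-trip time to a distance and turns one planar sweep into Cartesian points. The function names and the `angle_min`/`angle_increment` parameters are illustrative assumptions, not part of any particular lidar's interface.

```python
import math


def tof_distance(round_trip_seconds, c=299_792_458.0):
    """Time of flight: the pulse travels out and back, so halve the path."""
    return c * round_trip_seconds / 2.0


def scan_to_points(ranges, angle_min, angle_increment):
    """Convert a planar sweep from polar (range, angle) to Cartesian (x, y).

    ranges[i] is assumed to be measured at angle_min + i * angle_increment
    (radians) in the sensor's own frame. Beams with no return are skipped.
    """
    points = []
    for i, r in enumerate(ranges):
        if r is None or not math.isfinite(r):
            continue  # no echo for this beam
        theta = angle_min + i * angle_increment
        points.append((r * math.cos(theta), r * math.sin(theta)))
    return points
```

Calling `scan_to_points` on each sweep gives the point set that later matching steps operate on.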

Lidar is uniquely qualified for scanning at very high rates because of the speed of light and the fact that it carries its own directed illumination in the form of laser light. In addition, compared with triangulation-based techniques such as stereo vision or structured light, lidar can measure long distances without the need for a large baseline between cameras or emitter/detector pairs, which can be important on smaller vehicles.

Some lidars have the additional capability to specifically detect reflectors in the laser scan, providing a computationally inexpensive triangulation system for localization. However, reflector-based triangulation requires that three or more reflectors be visible at every point during the travel of the automated guided vehicle (AGV), which is sometimes a difficult requirement when the operating space is divided by shelving, machinery and other equipment.

Autonomous mobile robots

With the worldwide focus on Industry 4.0 initiatives and manufacturers looking to reduce cost and increase flexibility and productivity, the successor to AGV technology has emerged in the form of autonomous mobile robots (AMRs). These AMR systems are to AGV systems what collaborative robots of the last few years are to industrial robots. Free from wires, physical guidance and added infrastructure, such as reflectors, AMR systems transport materials and supplies not only within traditional manufacturing boundaries but between them. Traveling through tunnels and hallways, between and inside buildings, and riding elevators to access multiple floors, AMRs are as comfortable dodging a forklift as they are deftly negotiating narrow passageways and human walking traffic.

An autonomous mobile robot navigates around an obstacle. Courtesy of Aethon.

Real-world autonomous navigation

To achieve this feat, AMRs must be able to localize within their far-reaching boundaries, as well as plan pathways through congested areas that may look very different every time the AMR passes through. In this environment, especially when working in and among people, obstacle detection and avoidance are of paramount importance. Fortunately, the same sensors that let the AMR localize itself can also detect and report the presence and location of obstacles that the AMR must negotiate. Recent gains in the power and cost-effectiveness of these sensors, typically lidar, have made AMRs feasible and ushered in a wave of entrants into what is a rapidly growing product segment. Competition and shrinking electronics have taken a typical lidar used in mobile applications from more than $10,000 and the size of a basketball a decade ago to comparable models today that cost less than $2,000 and are as small as a baseball. Higher scan rates and finer angular resolution provide ever more data to feed modern localization algorithms.

Simultaneous localization and mapping

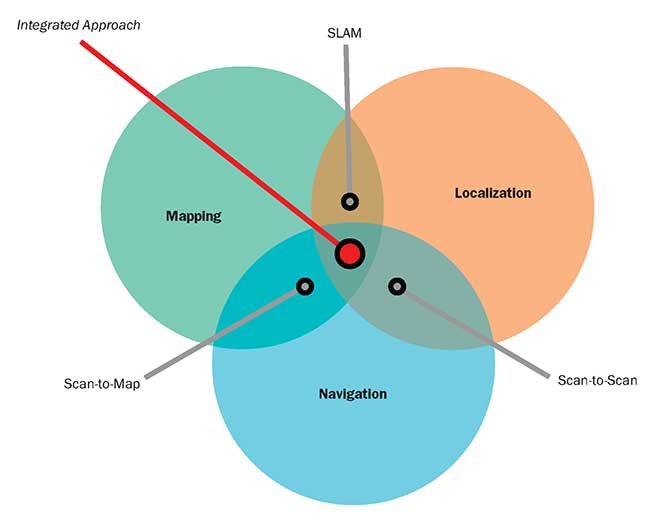

How does an AMR using lidar localize itself in large and small spaces, through tunnels and halls, in multiple buildings and on multiple floors, without reflectors or markers? AMRs use a technology known as simultaneous localization and mapping (SLAM), pioneered in the academic labs of the mid-1980s and 1990s, solving the problem of creating a map of an unknown environment while simultaneously maintaining a location within that map. SLAM algorithms remain an active research area with various versions of them being widely available to researchers and commercial users alike in, for instance, the open-source Robot Operating System (ROS) libraries, Carnegie Mellon University’s Robot Navigation Toolkit (CARMEN) and the Mobile Robot Programming Toolkit (MRPT), to name a few.

SLAM can use many sensors as input, with lidar being the most common and readily available at present. It often uses several different types of sensor inputs, with algorithms tailored to the strengths and limitations of each sensor type.

Each sensor has an associated algorithm for determining motion or position from its input. For example, odometry combines measured wheel revolutions with the kinematics of the mobile robot base to calculate movement. Sonar or ultrasonic sensors can estimate position based on standoff distances to local infrastructure. And lidar range scans can be used in several ways to estimate mobile robot motion or position.
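To make the odometry case concrete, here is a minimal sketch that assumes a differential-drive base (two driven wheels on a common axle). The article does not say which kinematic model a given robot uses, so the function name, the `wheel_base` parameter and the model itself are illustrative assumptions.

```python
import math


def odometry_update(x, y, theta, d_left, d_right, wheel_base):
    """Advance a pose (x, y, heading) from per-wheel travel distances.

    d_left and d_right are the distances rolled by each wheel since the
    last update (wheel revolutions times wheel circumference).
    wheel_base is the distance between the two wheels.
    """
    d_center = (d_left + d_right) / 2.0        # distance moved by the base
    d_theta = (d_right - d_left) / wheel_base  # change in heading, radians
    # Use the mid-step heading to approximate motion along a short arc.
    x += d_center * math.cos(theta + d_theta / 2.0)
    y += d_center * math.sin(theta + d_theta / 2.0)
    # Keep the heading wrapped to [-pi, pi).
    theta = (theta + d_theta + math.pi) % (2.0 * math.pi) - math.pi
    return x, y, theta
```

Because each update builds on the previous pose, small errors from wheel slip or calibration accumulate, which is why odometry is normally combined with other sensors.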

As an iterative inference problem, SLAM starts with three things: a known condition (the current location and pose of the AMR), a modeled prediction of a future condition (the location and pose estimate based on current speed and heading), and sensor data from multiple sources, each with estimates of its error and noise. SLAM then uses statistical techniques, including Kalman or particle filters, to approximate the robot’s location and pose iteratively.

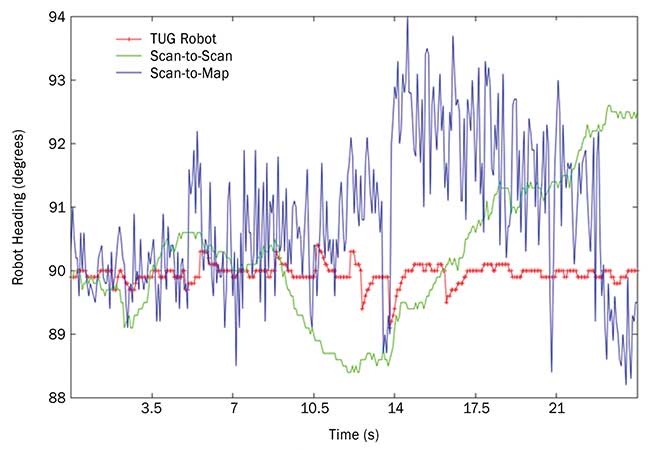

The chart illustrates the results of the extended Kalman filter. Shown is the output of a trip made by the TUG autonomous mobile robot from Aethon down an aisle at a manufacturing plant. The aisle is aligned with the angle of 90 degrees. The scan-to-scan algorithm (green), while relatively smooth, can drift off (as seen in the second half of the plot) because of accumulated errors. The scan-to-map algorithm (blue) gives noisy data whose mean may jump. These variations arise from mismatches between the map and the current environment caused by material being moved. The red line is the estimate of the TUG’s location calculated by combining all localization techniques through use of an extended Kalman filter. The jumps are caused by the use of the absolute positioning algorithms. Courtesy of Aethon.

Incorporating an extended Kalman filter

In a typical implementation of SLAM, an extended Kalman filter is used to estimate, through a probability density function, the state of the system. This estimation includes the AMR’s position and orientation, its linear and rotational velocities and linear accelerations. The filter proceeds in a two-step fashion of prediction and correction. In the first step, the estimated state for the current time is predicted from the previous state and only the physical laws of motion. In the second step, observations from sensor readings are used to make corrections to some of the estimated states. This is where the readings from external sensors, including lidar, provide their inputs. The observations include an uncertainty value and the filter attempts to maintain a set of states, represented by a mean and covariance matrix, consistent with the laws of motion and the observations provided. Two common SLAM algorithms for the correction step using lidar range data are scan-to-scan matching and scan-to-map matching.
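The two-step cycle can be sketched with a deliberately simplified filter. The code below is a linear, one-dimensional Kalman filter that tracks only position along an aisle, not the extended filter with the full state described above. The function names and all numbers are illustrative assumptions.

```python
def predict(mean, var, velocity, dt, process_var):
    """Prediction step: advance the state using the motion model only.

    Uncertainty grows by process_var because the model is imperfect.
    """
    return mean + velocity * dt, var + process_var


def correct(mean, var, measurement, measurement_var):
    """Correction step: blend in one observation, weighted by its uncertainty.

    A noisy measurement (large measurement_var) gets a small gain and
    moves the estimate only slightly.
    """
    gain = var / (var + measurement_var)
    return mean + gain * (measurement - mean), (1.0 - gain) * var


# One filter cycle (values are made up for illustration).
mean, var = 0.0, 0.04
mean, var = predict(mean, var, velocity=1.0, dt=0.1, process_var=0.01)
mean, var = correct(mean, var, measurement=0.12, measurement_var=0.05)
```

In this sketch each sensor-derived position estimate would be passed to `correct` with its own variance, which is how a smooth but drifting source and a noisy but absolute source can be weighed against each other.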

The integrated approach to autonomous mobile robot (AMR) navigation. Courtesy of Aethon.

Scan-to-scan matching

In scan-to-scan matching, sequential lidar range data is used to estimate the change in the AMR’s position between scans, and these changes are accumulated into an updated location and pose. This algorithm is independent of an existing map, so it is heavily relied upon when a map does not exist, such as during initial map creation, or when the current environment does not closely match the stored map because of changes in the environment. As an incremental algorithm, scan-to-scan matching is subject to long-term drift and has no means of correcting errors once they have accumulated.
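A minimal sketch of the idea follows. It assumes the hard part, deciding which point in the current scan corresponds to which point in the previous scan, is already solved, so the motion can be found in closed form. Practical matchers such as iterative closest point (ICP) must estimate those correspondences iteratively. The function names are illustrative.

```python
import math


def estimate_motion(prev_pts, curr_pts):
    """Estimate robot motion (dx, dy, dtheta) between two scans.

    prev_pts[i] and curr_pts[i] are assumed to be the same physical point
    seen from the previous and current robot frames. The result is the
    current pose expressed in the previous robot frame.
    """
    n = len(prev_pts)
    pcx = sum(p[0] for p in prev_pts) / n
    pcy = sum(p[1] for p in prev_pts) / n
    qcx = sum(q[0] for q in curr_pts) / n
    qcy = sum(q[1] for q in curr_pts) / n
    s_dot = s_cross = 0.0
    for (px, py), (qx, qy) in zip(prev_pts, curr_pts):
        ax, ay = qx - qcx, qy - qcy  # centered current point
        bx, by = px - pcx, py - pcy  # centered previous point
        s_dot += ax * bx + ay * by
        s_cross += ax * by - ay * bx
    dtheta = math.atan2(s_cross, s_dot)  # least-squares rotation
    c, s = math.cos(dtheta), math.sin(dtheta)
    dx = pcx - (c * qcx - s * qcy)       # translation aligning the centroids
    dy = pcy - (s * qcx + c * qcy)
    return dx, dy, dtheta


def compose(pose, delta):
    """Accumulate a scan-to-scan increment onto a pose in the world frame."""
    x, y, th = pose
    dx, dy, dth = delta
    return (x + math.cos(th) * dx - math.sin(th) * dy,
            y + math.sin(th) * dx + math.cos(th) * dy,
            th + dth)
```

Each call to `compose` builds on the previous result, so any error in one increment is carried into every later pose. That is the drift described above.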

Scan-to-map matching

In scan-to-map matching, lidar scan range data is used to estimate the position of the AMR by matching readings directly to the stored map. This can be done on a purely point-by-point basis, or by a more robust but computationally costly method of matching the readings radially against the first object encountered in the map. As an absolute algorithm, scan-to-map matching is not typically subject to the drift seen with scan-to-scan matching. However, it is subject to other errors caused by repetition in the environment, where the map looks very similar from different locations or orientations. In addition, when the current environment does not closely match the stored map, incorrect matching can cause erroneous discontinuous changes in position. And, once it is far out of position, scan-to-map matching can have a difficult time getting back on track.
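The point-by-point variant can be sketched as a scoring function over candidate poses. The map representation (a set of occupied grid cells), the cell size and the function names below are assumptions made for illustration, not a description of any particular product's implementation.

```python
import math


def score_pose(pose, scan_pts, occupied, cell=0.1):
    """Count scan points that land on occupied map cells for a candidate pose.

    pose is (x, y, heading) in the map frame, scan_pts are (x, y) points in
    the robot frame, and occupied is a set of (col, row) grid indices.
    """
    x, y, th = pose
    c, s = math.cos(th), math.sin(th)
    hits = 0
    for px, py in scan_pts:
        wx = x + c * px - s * py  # scan point transformed into the map frame
        wy = y + s * px + c * py
        if (math.floor(wx / cell), math.floor(wy / cell)) in occupied:
            hits += 1
    return hits


def best_pose(candidates, scan_pts, occupied, cell=0.1):
    """Return the candidate pose whose transformed scan best agrees with the map."""
    return max(candidates, key=lambda p: score_pose(p, scan_pts, occupied, cell))
```

A scan of a long, featureless wall scores equally well for any candidate pose shifted along that wall, so this simple score cannot tell those poses apart. That is the repetition problem noted above.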

Overcoming limitations — an integrated approach

All SLAM algorithms are ultimately based on sensor readings of the environment. When no objects are in a suitable placement to be read by the sensors, as seen with an empty shelf in a warehouse and a 2D lidar at that exact height, the introduction of 3D lidar or 3D stereo depth camera-based sensing can greatly add to localization performance. However, these sensors come at a potentially higher cost and with a significantly higher computational requirement. One way to reduce computational requirements when presented with so much data is to extract features from the scan or image that can then be processed by the SLAM algorithms, so the SLAM algorithms do not deal with each individual pixel in an image.

This strategy requires a robust algorithm to extract features consistently from scan to scan, in spite of the difference in viewing angle, lighting or reflectivity, when in motion passing through a scene. This is no small task. In addition, applications requiring travel out of doors, or between inside and outside, can place additional requirements on sensors and their ability to handle changes in light/dark, direct sunlight and more.

Most SLAM implementations in use today by AMRs are compromises in capability based on sensor cost and computational requirements, as well as the power requirements of increased computation in mobile vehicles. As more functionality is being integrated into high-end machine vision cameras to support the kinds of applications in which they are used — inspection, measurement and defect detection — perhaps the needs of SLAM-based algorithms can also be integrated directly into machine vision cameras of the future. Such integration could provide feature extraction and ranging, for example, thereby offloading the processing requirements of the AMR.

None of these SLAM algorithms is completely satisfactory on its own and, in fact, they can give contradictory location solutions, but each has strengths in different situations. Used in tandem, odometry, scan-to-scan matching, scan-to-map matching and additional techniques such as feature extraction and matching can compensate for one another’s shortcomings to provide accurate and reliable performance in real-world settings and applications.

Meet the author

Spencer Allen is the chief technical officer of Aethon Inc. in Pittsburgh, where he directs development of the TUG autonomous mobile robot, transporting materials and supplies in hospitals and manufacturing environments; email: sallen@aethon.com.